Abstract

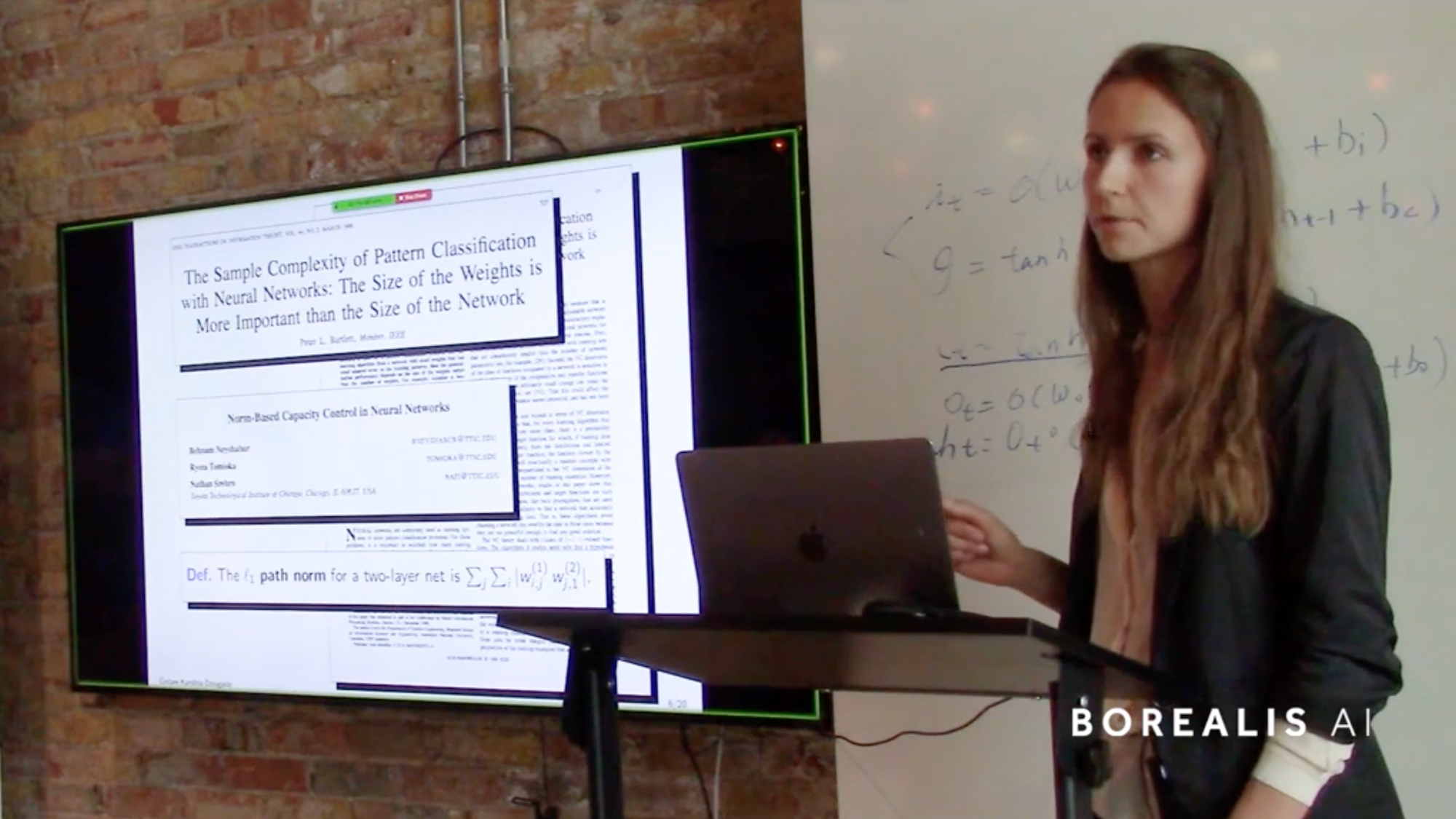

Karolina presents her recent work on constructing generalization bounds in order to understand existing learning algorithms and propose new ones. Generalization bounds relate empirical performance to future expected performance. The tightness of these bounds varies widely: it depends on the complexity of the learning task and the amount of data available, and also on how much information the bounds take into account. Her work is particularly concerned with data- and algorithm-dependent bounds that are quantitatively nonvacuous. She presents bounds built from solutions obtained by stochastic gradient descent (SGD) on MNIST. By formalizing the notion of flat minima using PAC-Bayes generalization bounds, she obtains nonvacuous generalization bounds for stochastic classifiers built by randomly perturbing SGD solutions.
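
To make "nonvacuous" concrete, the following is a minimal sketch of how a PAC-Bayes bound can be evaluated numerically. It assumes the standard PAC-Bayes-kl form (Langford-Seeger, with Maurer's constant), which is not necessarily the exact variant used in the talk. The function names and the numbers in the usage lines are hypothetical and chosen only for illustration; they are not results from the papers.

```python
import math


def binary_kl(q, p):
    """KL divergence between Bernoulli(q) and Bernoulli(p), in nats."""
    eps = 1e-12
    p = min(max(p, eps), 1 - eps)  # clip p to keep the logarithms finite
    total = 0.0
    if q > 0:
        total += q * math.log(q / p)
    if q < 1:
        total += (1 - q) * math.log((1 - q) / (1 - p))
    return total


def kl_inverse_upper(q, c, iters=100):
    """Upper end of {p in [q, 1] : binary_kl(q, p) <= c}, found by bisection."""
    lo, hi = q, 1.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        if binary_kl(q, mid) <= c:
            lo = mid
        else:
            hi = mid
    return hi  # return the upper bracket so the result errs on the safe side


def pac_bayes_kl_bound(emp_error, kl_qp, m, delta=0.05):
    """Bound on the expected error of a stochastic classifier Q.

    Assumed form: kl(emp_error || true_error) <= (KL(Q||P) + ln(2*sqrt(m)/delta)) / m,
    holding with probability at least 1 - delta over a sample of size m.
    """
    c = (kl_qp + math.log(2 * math.sqrt(m) / delta)) / m
    return kl_inverse_upper(emp_error, c)


# Hypothetical inputs: empirical error, KL(Q||P) in nats, and sample size.
tight = pac_bayes_kl_bound(emp_error=0.03, kl_qp=5_000.0, m=55_000)
loose = pac_bayes_kl_bound(emp_error=0.03, kl_qp=50_000.0, m=55_000)
print(f"bound with small KL: {tight:.3f}")
print(f"bound with large KL: {loose:.3f}")
```

A bound is nonvacuous when it falls meaningfully below 1 (for binary classification, below the 0.5 achieved by guessing). The sketch shows why the KL term matters: with the sample size held fixed, a posterior that stays close to the prior yields a much tighter bound than one that drifts far from it.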

Joint work with Daniel M. Roy, based on three papers: "Computing Nonvacuous Generalization Bounds for Deep (Stochastic) Neural Networks with Many More Parameters than Training Data", "Entropy-SGD optimizes the prior of a PAC-Bayes bound: Generalization properties of Entropy-SGD and data-dependent priors", and "Data-dependent PAC-Bayes priors via differential privacy".
